With today’s AI systems, especially in Generative AI, the main challenge isn’t building a basic system with, say, 70% accuracy; it’s pushing that system past 90% and making it reliable in real-world production. Optimizing RAG (Retrieval-Augmented Generation) systems is essential for reaching this level. Effective chunking, one of the foundational … Continue reading Optimizing RAG Systems: A Deep Dive into Chunking Strategies.

Tag: rag

RAG Components – 10,000 ft Level

When you look at Generative AI, and specifically LLMs, from a usage point of view, there are a few techniques for interacting with them. Which one fits depends on whether you need to work with external data or just use LLMs for your different tasks. RAG is an important and widely adopted technique because it helps you … Continue reading RAG Components – 10,000 ft Level

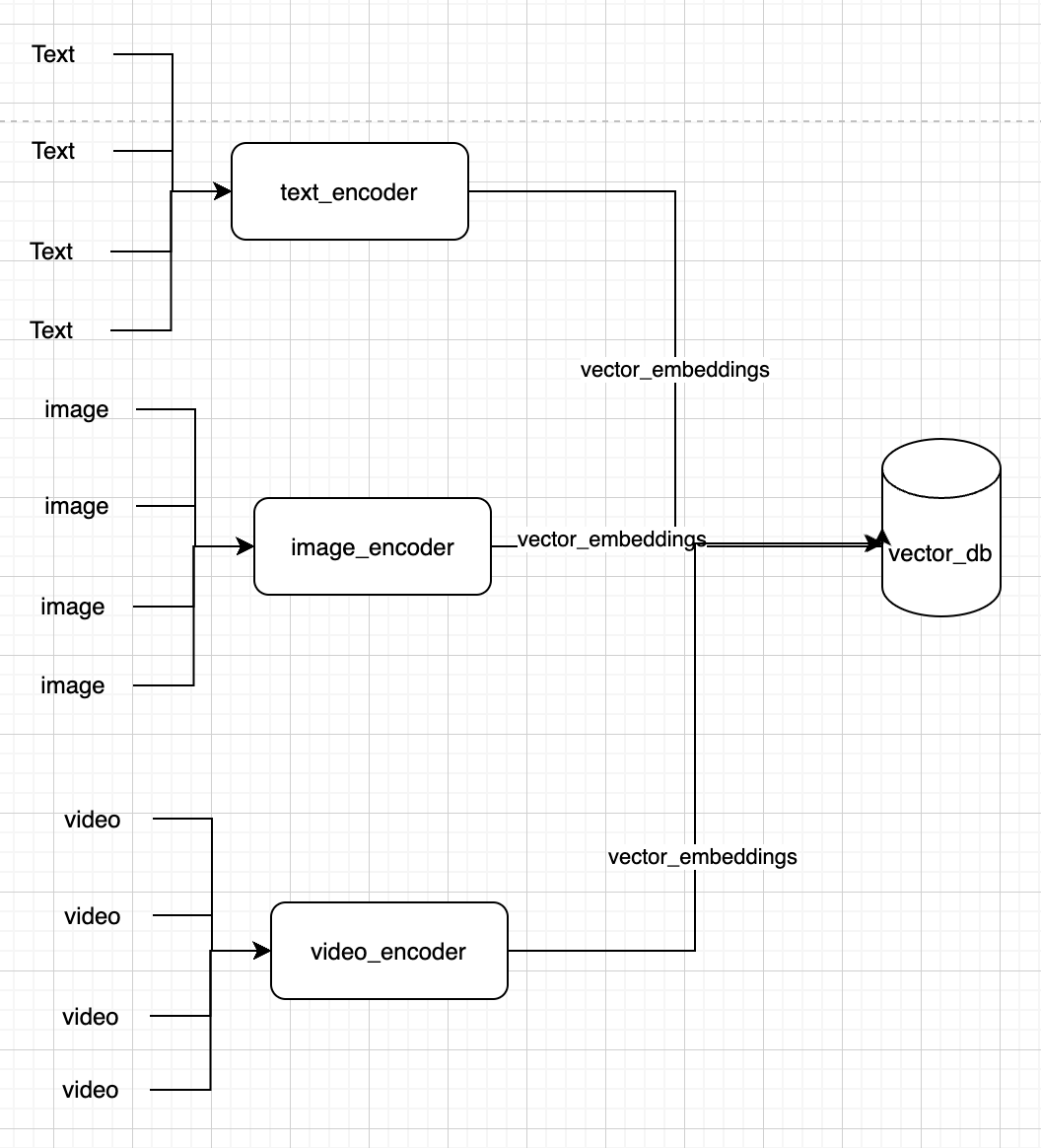

Experimenting With Multi Model Rag and Google Gemini

In the world of LLMs, RAG has gained quite a bit of traction because it helps reduce hallucination by giving LLMs access to external sources and grounding the results. In simple words, RAG is basically providing an external data source for Generative AI models to get better context on user … Continue reading Experimenting With Multi Model Rag and Google Gemini

Understanding RAG and Vector DB

Anyone in the field of AI and ML probably knows all of these terms. But how do you explain them to someone new? Let's try understanding this. USE CASE: Mark wants to plan a trip to Georgia in Europe and wants to use an LLM for this. INTERACTION WITH LLM: Attempt … Continue reading Understanding RAG and Vector DB