As generative AI evolves, multi-agent systems (MASs) are gaining traction, yet many remain stuck at the proof-of-concept (PoC) stage. This is where research papers and surveys help. Over the weekend, I looked into where MASs fail and found a fascinating study worth sharing.

MASs promise enhanced collaboration and problem-solving, but ensuring consistent performance gains over single-agent frameworks remains challenging. A recent study categorizes failure modes in MASs, providing valuable insights into why they often fall short.

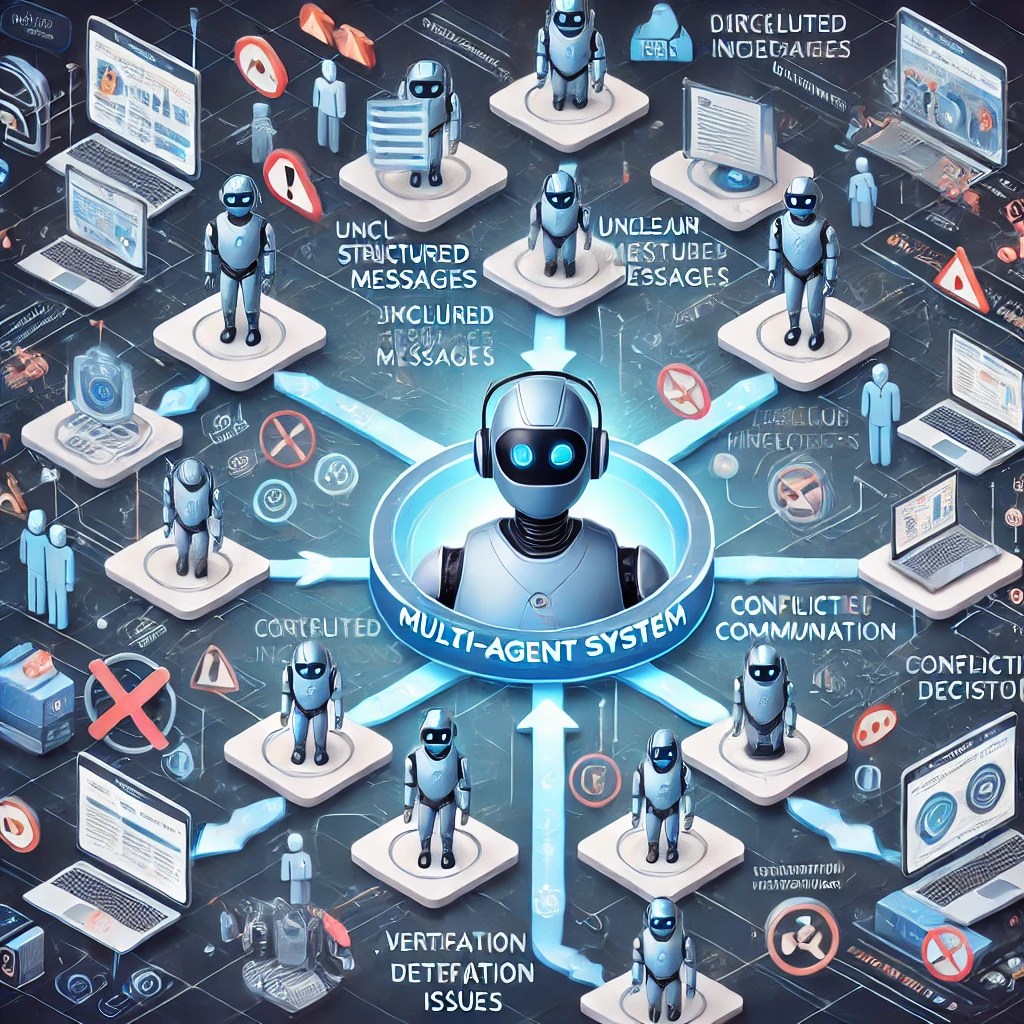

Key Failure Modes in Multi-Agent Systems

The research groups the failures it observed into three primary categories. The percentages show each category's share of those failures:

1. Specification & System Design Failures (37.17%)

- Unclear task instructions and agent roles

- Ineffective conversation management, leading to loss of context

- Agents failing to adhere to predefined task specifications

2. Inter-Agent Misalignment (31.41%)

- Ineffective communication and information withholding

- Conflicting behaviors leading to misalignment

- Failure to seek clarification or incorporate other agents’ input

3. Task Verification & Termination Issues (31.41%)

- Premature termination of processes

- No or incomplete verification mechanisms

- Incorrect validation of task completion

How Can We Address These Failures?

Short-Term (Tactical) Fixes

🛠 Improved Specification & Design

- Define agent roles and responsibilities clearly.

- Use structured prompts and self-verification steps.

- Design conversation frameworks for better coordination.
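
To make the first two points concrete, here is a minimal sketch of a role specification that is rendered into a structured prompt with a built-in self-verification checklist. The `AgentRole` class, its fields, and `build_prompt` are hypothetical names chosen for illustration; they do not come from the study or from any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role spec: who the agent is and what 'done' means."""
    name: str
    responsibility: str
    allowed_actions: tuple[str, ...]
    done_criteria: tuple[str, ...]


def build_prompt(role: AgentRole, task: str) -> str:
    """Render the role and task as a structured prompt with a self-check list."""
    lines = [
        f"ROLE: {role.name}",
        f"RESPONSIBILITY: {role.responsibility}",
        "ALLOWED ACTIONS: " + ", ".join(role.allowed_actions),
        f"TASK: {task}",
        "BEFORE ANSWERING, VERIFY:",
    ]
    # Each completion criterion becomes an explicit self-verification item.
    lines += [f"- {criterion}" for criterion in role.done_criteria]
    return "\n".join(lines)


reviewer = AgentRole(
    name="Reviewer",
    responsibility="Review code written by the Programmer agent",
    allowed_actions=("comment", "approve", "reject"),
    done_criteria=("Every requirement in the task is addressed",),
)
print(build_prompt(reviewer, "Review the login module"))
```

Keeping the role in a data structure rather than free text means the same definition can feed both the prompt and any downstream check that the agent stayed within its allowed actions.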

🔄 Enhancing Inter-Agent Collaboration

- Implement cross-verification mechanisms.

- Use structured conversation patterns to improve teamwork.

- Design modular agent architectures for simplified interactions.
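
One simple way to picture cross-verification is a quorum check: an answer is accepted only if enough independent verifiers approve it. The sketch below uses plain Python functions as stand-ins for verifier agents. The `cross_verify` function and both example verifiers are assumptions for illustration, not the mechanism used in the study.

```python
from typing import Callable

# A verifier takes (task, answer) and returns True if it approves the answer.
Verifier = Callable[[str, str], bool]


def cross_verify(task: str, answer: str, verifiers: list[Verifier], quorum: int) -> bool:
    """Accept the answer only if at least `quorum` verifiers approve it."""
    approvals = sum(1 for verify in verifiers if verify(task, answer))
    return approvals >= quorum


# Stand-in verifiers; in a real system each would be a separate agent or tool.
def non_empty(task: str, answer: str) -> bool:
    return bool(answer.strip())


def has_function(task: str, answer: str) -> bool:
    return "def " in answer


accepted = cross_verify(
    task="Write a Python function",
    answer="def add(a, b): return a + b",
    verifiers=[non_empty, has_function],
    quorum=2,
)
print(accepted)
```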

While these fixes have shown measurable improvements (e.g., a +14% accuracy boost in ChatDev), they don’t solve all issues.

Long-Term (Structural) Solutions

✅ Enhanced Verification Mechanisms

- Develop automated unit test generation for MAS domains.

- Implement robust quality assurance frameworks.

🗣 Standardized Communication Protocols

- Move beyond unstructured text-based communication.

- Define clear intentions and structured parameters for inter-agent dialogue.
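
As a rough illustration of what "clear intentions and structured parameters" could look like, the sketch below defines a typed message with an explicit intent and rejects anything malformed. The `Intent` values, the `Message` fields, and `parse_message` are hypothetical; the study does not prescribe a specific schema.

```python
import json
from dataclasses import dataclass, field
from enum import Enum


class Intent(Enum):
    """Hypothetical set of intents an agent may declare."""
    REQUEST = "request"
    INFORM = "inform"
    CLARIFY = "clarify"
    DONE = "done"


@dataclass
class Message:
    sender: str
    receiver: str
    intent: Intent
    params: dict = field(default_factory=dict)

    def to_json(self) -> str:
        return json.dumps({
            "sender": self.sender,
            "receiver": self.receiver,
            "intent": self.intent.value,
            "params": self.params,
        })


def parse_message(raw: str) -> Message:
    """Parse and validate a message; raise ValueError on malformed input."""
    data = json.loads(raw)
    missing = {"sender", "receiver", "intent"} - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    # Intent(...) raises ValueError for an intent outside the agreed set.
    return Message(data["sender"], data["receiver"], Intent(data["intent"]), data.get("params", {}))


msg = Message("planner", "coder", Intent.REQUEST, {"file": "auth.py"})
print(parse_message(msg.to_json()))
```

Because the receiver validates the intent before acting, an ambiguous or off-protocol message fails loudly instead of being silently misread.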

🎯 Reinforcement Learning for Agent Behavior

- Use role-specific RL algorithms to fine-tune agent actions.

- Encourage task-aligned decision-making and penalize inefficiencies.

🤖 Uncertainty Quantification

- Introduce probabilistic confidence measures for better decision-making.

- Help agents assess when to act vs. when to seek input.
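
A minimal sketch of the "act vs. seek input" decision, assuming confidence is estimated from agreement among several sampled answers to the same question. That agreement heuristic, the `decide` function, and the 0.7 threshold are illustrative assumptions, not something the study specifies.

```python
from collections import Counter


def decide(samples: list[str], threshold: float = 0.7) -> tuple[str, str, float]:
    """Return (action, answer, confidence) from several sampled answers.

    Confidence is the share of samples that agree with the most common answer.
    """
    if not samples:
        raise ValueError("need at least one sample")
    answer, count = Counter(samples).most_common(1)[0]
    confidence = count / len(samples)
    # Below the threshold, the agent asks for input instead of acting.
    action = "act" if confidence >= threshold else "ask"
    return action, answer, confidence


print(decide(["42", "42", "42", "41"]))
print(decide(["42", "41", "40"]))
```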

🧠 Improved Memory & State Management

- Enhance long-term context retention.

- Ensure reliable tracking of progress over extended interactions.
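
Progress tracking can be sketched as an explicit ledger that lives outside the conversation, so the state survives even when the context window is trimmed. The `TaskLedger` class below is a hypothetical helper for illustration only.

```python
from dataclasses import dataclass, field


@dataclass
class TaskLedger:
    """Explicit record of planned steps and their results (hypothetical helper)."""
    steps: list[str]
    results: dict[str, str] = field(default_factory=dict)

    def complete(self, step: str, result: str) -> None:
        if step not in self.steps:
            raise KeyError(f"unknown step: {step}")
        self.results[step] = result

    def pending(self) -> list[str]:
        return [s for s in self.steps if s not in self.results]

    def can_terminate(self) -> bool:
        # Guards against premature termination: every planned step needs a result.
        return not self.pending()

    def summary(self) -> str:
        # Compact state that can be re-injected into a prompt after context is trimmed.
        lines = [
            f"[x] {s}: {self.results[s]}" if s in self.results else f"[ ] {s}"
            for s in self.steps
        ]
        return "\n".join(lines)


ledger = TaskLedger(steps=["plan", "implement", "test"])
ledger.complete("plan", "3 modules outlined")
print(ledger.summary())
print(ledger.can_terminate())
```

The same ledger also speaks to the termination failures listed earlier: an orchestrator can refuse to end the run while `pending()` is non-empty.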

The Road Ahead

The study's main takeaway is that incremental fixes aren’t enough and that MASs need fundamental design improvements. Future research should focus on:

- Better evaluation benchmarks for MAS performance

- Enhanced agent communication models to reduce ambiguity

- Advanced AI techniques for improved collaboration

With an open-sourced dataset and LLM annotator, this research lays a strong foundation for scalable and reliable multi-agent collaboration in AI.

Paper Link: Multi-agent systems failure taxonomy

What Do You Think?

What other strategies could improve multi-agent LLM systems? Let’s discuss in the comments!